Two-Facet Generalizability Analysis in Thesis Examination Assessment at IAI Nurul Hakim Kediri West Lombok

DOI:

https://doi.org/10.51700/mutaaliyah.v5i2.1162

Keywords:

Generalizability Theory, Assessment Consistency, Assessment Variability

Abstract

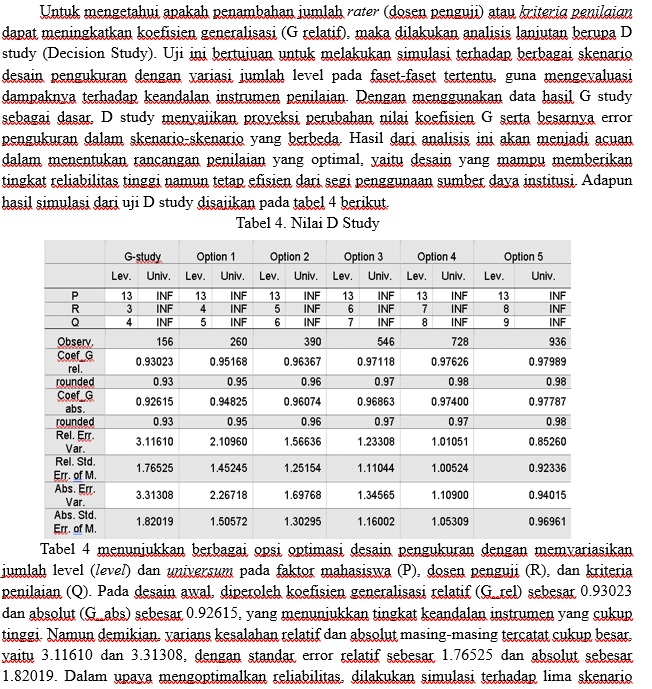

This study evaluates the consistency and fairness of undergraduate thesis assessments at IAI Nurul Hakim Kediri, West Lombok, using Generalizability Theory (G-Theory). The data consist of scores given to 13 students by three examiners on four standardized evaluation criteria. Analysis with the EduG software showed that the largest source of error was the interaction among persons, raters, and criteria (PRQ). The generalizability coefficient of 0.93 indicates excellent reliability of the assessment instrument. To enhance fairness, variability arising from facet interactions should be reduced. Simulations showed that increasing the number of raters and criteria could improve reliability. Among the simulated designs, Option 5 yielded the best results, but Options 3 and 4 are also worth considering because they balance reliability against resource efficiency. This study underscores the importance of well-designed assessment systems for fair and consistent undergraduate thesis evaluations.
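The simulations mentioned in the abstract correspond to what G-Theory calls a decision (D) study: variance components are held fixed while the number of raters and criteria is varied. The sketch below is only an illustration of that idea, not the study's EduG computation. It assumes a fully crossed persons x raters x criteria design with random facets and relative decisions, and the variance components in `components` are hypothetical placeholders, not values reported by the authors. The function name `g_coefficient` and the list of designs are likewise illustrative.

```python
def g_coefficient(var_p, var_pr, var_pq, var_prq, n_r, n_q):
    """Relative generalizability coefficient for a crossed p x r x q design.

    var_p   : variance among persons (the object of measurement)
    var_pr  : person-by-rater interaction variance
    var_pq  : person-by-criterion interaction variance
    var_prq : person-by-rater-by-criterion interaction variance
              (confounded with residual error)
    n_r, n_q: number of raters and criteria in the design being simulated
    """
    # Relative error variance: each interaction term is averaged over
    # the number of conditions sampled for the facets it involves.
    rel_error = var_pr / n_r + var_pq / n_q + var_prq / (n_r * n_q)
    return var_p / (var_p + rel_error)


# HYPOTHETICAL variance components, chosen only to make the example run.
components = dict(var_p=4.0, var_pr=0.5, var_pq=0.3, var_prq=1.2)

# Illustrative designs as (raters, criteria); these are NOT the paper's Options.
designs = [(2, 4), (3, 4), (4, 4), (3, 6), (5, 6)]

for n_r, n_q in designs:
    coef = g_coefficient(**components, n_r=n_r, n_q=n_q)
    print(f"raters={n_r}, criteria={n_q}: G = {coef:.3f}")
```

Under these assumptions the coefficient rises as raters or criteria are added, because every error term is divided by a larger number of conditions. The gain shrinks with each addition, which is why a smaller design can be a reasonable compromise between reliability and examiner workload.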

License

Copyright (c) 2025 Ahmad Ahmad, Mariano Dos Santos

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.

License Terms

Works in the journal AL-Muta`aliyah are licensed under the Creative Commons Attribution-ShareAlike 4.0 International License (CC BY-SA 4.0) under the following terms:

1) Users are free to copy and redistribute the material in any medium or format, and to remix, modify, and build upon the material under these terms.

2) Users must give appropriate credit, provide a link to the license, and indicate if changes were made.

3) Users may do so in any reasonable manner, but not in any way that suggests the licensor endorses them or their use.